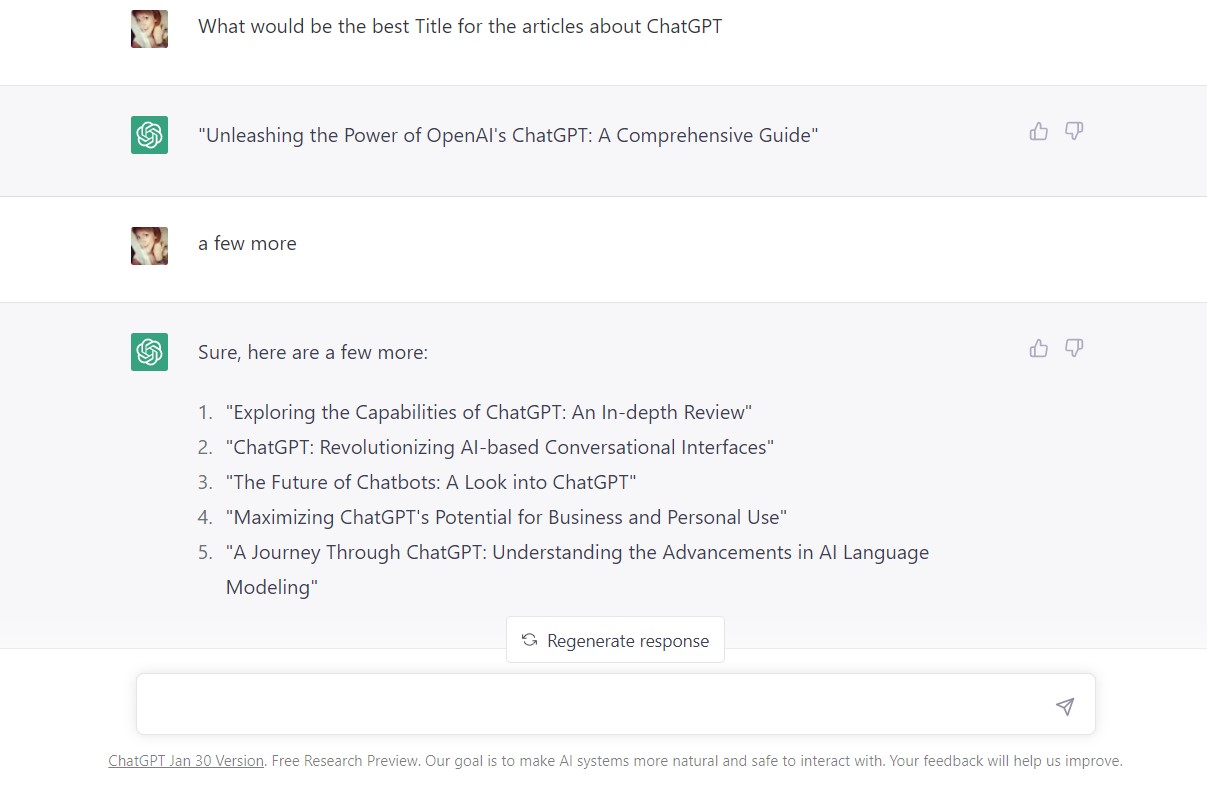

What is ChatGPT and How Can It Be Used?

OpenAI has launched a long-form question-answering AI called ChatGPT that answers complex questions conversationally. Here is what ChatGPT is and why it may be the most important tool since modern search engines. It is considered a game-changing innovation because it has been trained to understand what humans mean when they ask questions.

Many users are impressed by its capacity to produce human-quality responses, raising the possibility that it might one day disrupt how humans interact with computers and transform how information is retrieved.

ChatGPT: What Is It?

OpenAI developed ChatGPT, a chatbot built on a large language model based on GPT-3.5. It has a remarkable ability to hold a conversation and deliver answers that can appear surprisingly human. Large language models perform the task of predicting the next word in a sequence of words.

Reinforcement Learning from Human Feedback (RLHF) is an additional training layer that uses human feedback to help ChatGPT learn to follow instructions and produce responses that humans find satisfactory.

Who Developed ChatGPT?

OpenAI, a San Francisco-based artificial intelligence organization, developed ChatGPT. The non-profit OpenAI Inc. is the for-profit OpenAI LP’s parent company.

OpenAI is well-known for its DALL·E deep-learning model, which creates images from written instructions known as prompts. Sam Altman, who formerly served as Y Combinator’s president, is the CEO. Microsoft is a partner and investor, having invested $1 billion. Together they developed the Azure AI Platform.

Large Language Models

To put it simply, ChatGPT is a large language model (LLM). Large language models are trained on substantial volumes of data to accurately predict the next word in a sentence.

It was discovered that increasing the amount of training data increased the ability of language models to do more. LLMs function similarly to autocomplete, but on a much larger scale: they predict the next word in a series of words within a sentence, and then the sentences that follow. This capability enables them to generate paragraphs and entire pages of content.
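The autocomplete comparison can be made concrete with a toy example. The sketch below is not how ChatGPT or GPT-3.5 works internally (those are neural networks trained on vast datasets); it is a minimal bigram counter, with a made-up corpus and hypothetical function names, that only illustrates the idea of predicting the next word from what came before and chaining predictions to generate text.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(model, start_word, max_words=8):
    """Repeatedly append the predicted next word, like autocomplete in a loop."""
    output = [start_word.lower()]
    while len(output) < max_words:
        next_word = predict_next(model, output[-1])
        if next_word is None:
            break
        output.append(next_word)
    return " ".join(output)


# Tiny illustrative corpus (an assumption, not real training data).
corpus = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)
model = train_bigram_model(corpus)
print(predict_next(model, "sat"))  # on
print(generate(model, "the", max_words=4))
```

A real LLM replaces the frequency table with a learned model that conditions on far more than one preceding word, which is what lets it stay coherent across paragraphs.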

However, LLMs are limited in that they may not always grasp exactly what a person wants. This is where ChatGPT improves on the state of the art, through its Reinforcement Learning from Human Feedback (RLHF) training.

Who Trained ChatGPT and How?

GPT-3.5, the model behind ChatGPT, was trained on large quantities of data collected from the internet, including Reddit discussions, to help ChatGPT learn dialogue and produce human-like responses.

ChatGPT was also trained using Reinforcement Learning from Human Feedback, so that the AI would learn what humans expect when they ask a question. This method of training the LLM is ground-breaking because it goes beyond merely teaching the LLM to predict the next word.

The developers behind ChatGPT enlisted third-party raters, known as “labelers”, to rate the outputs of GPT-3 and the newer InstructGPT. According to the research article, the results for InstructGPT were positive.

However, it was also noted that there was still room for improvement.

ChatGPT differs from other chatbots in that it was specifically trained to understand the human intent behind a question and to offer helpful, accurate, and harmless responses. As a result of this training, ChatGPT may challenge certain questions and discard the parts of a question that do not make sense. Another research study related to ChatGPT details how the AI was trained to predict what people prefer.

The researchers discovered that the measures used to grade the outputs of natural language processing AI produced machines that performed well on the metrics but did not match what people wanted.

The researchers provided the following explanation of the issue:

Many machine learning applications optimize simple metrics, which are only rough proxies for the designer’s intent. This can lead to problems, such as YouTube recommendations promoting click-bait.

As a result, their idea was to develop an AI that could generate responses suited to what people wanted. To achieve this, they trained the AI using datasets of human comparisons between different answers, so that the system grew better at predicting which answers humans would judge to be good. According to the research, training was done on summaries of Reddit posts, and the model was also evaluated on summarising news.
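To illustrate what learning from human comparisons can look like, here is a minimal sketch. It assumes a common pairwise formulation in which a scoring function is fitted so that the answer a human preferred scores higher than the one they rejected. The linear scoring function, the two features, and the sample comparisons are all hypothetical and chosen for readability; this is not OpenAI's implementation, which uses a neural network as the scoring model.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def reward(weights, features):
    """Score an answer as a weighted sum of its features."""
    return sum(w * f for w, f in zip(weights, features))


def preference_loss(weights, preferred, rejected):
    """Pairwise loss: small when the preferred answer scores higher."""
    margin = reward(weights, preferred) - reward(weights, rejected)
    return -math.log(sigmoid(margin))


def train(comparisons, n_features, learning_rate=0.5, epochs=200):
    """Fit the reward weights by gradient descent on the pairwise loss."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for preferred, rejected in comparisons:
            margin = reward(weights, preferred) - reward(weights, rejected)
            # Derivative of -log(sigmoid(margin)) w.r.t. margin is sigmoid(margin) - 1.
            scale = sigmoid(margin) - 1.0
            for i in range(n_features):
                weights[i] -= learning_rate * scale * (preferred[i] - rejected[i])
    return weights


# Hypothetical features per summary: [covers_key_points, is_concise].
# Each pair is (summary the human preferred, summary the human rejected).
comparisons = [
    ([1.0, 1.0], [0.0, 1.0]),
    ([1.0, 0.0], [0.0, 0.0]),
    ([1.0, 1.0], [1.0, 0.0]),
]
weights = train(comparisons, n_features=2)
print(weights)
print(reward(weights, [1.0, 1.0]) > reward(weights, [0.0, 0.0]))  # True
```

Once such a scoring function exists, it can stand in for a human rater and guide further training of the language model, which is the role human feedback plays in RLHF.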

Are There Disadvantages of ChatGPT?

Directions Affect Answer Quality

One significant disadvantage of ChatGPT is that the quality of the output depends on the quality of the input. In other words, expert instructions (prompts) produce better answers.

Answers Aren’t Always Accurate

Another disadvantage is that because it has been trained to deliver responses that feel appropriate to humans, the replies may deceive humans into believing that the output is correct.

Incorrect Responses

ChatGPT is specifically engineered not to respond in a harmful or damaging manner, so it will avoid answering questions that call for such responses. Separately, many users have observed that ChatGPT can give incorrect responses, some of which are wildly wrong. The moderators of the coding Q&A website Stack Overflow may have discovered an unintended consequence of answers that feel right to humans.

Stack Overflow was inundated with user responses generated by ChatGPT that looked right, yet many were incorrect. The volunteer moderator team was overwhelmed by the hundreds of replies, leading administrators to ban users from posting answers generated by ChatGPT.

OpenAI, the developer of ChatGPT, is aware that ChatGPT can produce incorrect responses that appear correct, as the Stack Overflow moderators experienced, and cautioned about it in its announcement of the new technology.

OpenAI Clarifies ChatGPT’s Disadvantages

The OpenAI announcement included the following remark:

ChatGPT occasionally writes plausible-sounding but erroneous or illogical responses. Trying to fix this problem is difficult because:

- There is presently no source of truth during RL training;

- When the model is trained to be more cautious, it declines inquiries that it can accurately answer;

- Since the optimal response is dependent on the model’s knowledge rather than the human demonstrator’s, supervised training misleads the model.

Is It Free to Use ChatGPT?

ChatGPT is presently available for free during the “research preview” period. The chatbot is available for people to test out to help the AI become more competent at answering queries and learning from its failures.

According to the official announcement, OpenAI is eager to receive feedback about the mistakes:

While we’ve made efforts to make the model refuse inappropriate requests, it will sometimes respond to harmful instructions or exhibit biased behavior. We’re using the Moderation API to warn or block certain types of unsafe content, but we expect it to have some false negatives and positives. We’re eager to collect user feedback to aid our ongoing work to improve this system.

An ongoing contest, with a prize of up to $500 in API credits, is designed to encourage the general public to rate the answers. Users are invited to submit feedback on problematic model outputs and on false positives/negatives from the external content filter, which is also part of the interface.

We are primarily focused on feedback on potentially detrimental outcomes that may occur in real-world, non-adversarial settings, as well as feedback that assists us in identifying and understanding novel hazards and potential mitigations. If you want to try your luck at winning up to $500 in API credits, you may enter the ChatGPT Feedback Contest. All submissions must be made using the ChatGPT interface’s associated feedback form.