Future Technological Advances That Will Shape the Next Decade

Top 3 Takeaways

AI, 5G, and smart tech are already changing daily life—whether we notice or not.

Businesses that integrate automation, data, and conversational AI now will stay ahead.

The next decade isn’t about knowing it all—it’s about being ready to shift when tech does.

As global powers shift and we all sit around talking about what’s next, you know what keeps coming up?

Technology.

Not just new gadgets, but real changes. Stuff that’s already starting to affect how we work, shop, get around, and even talk to each other.

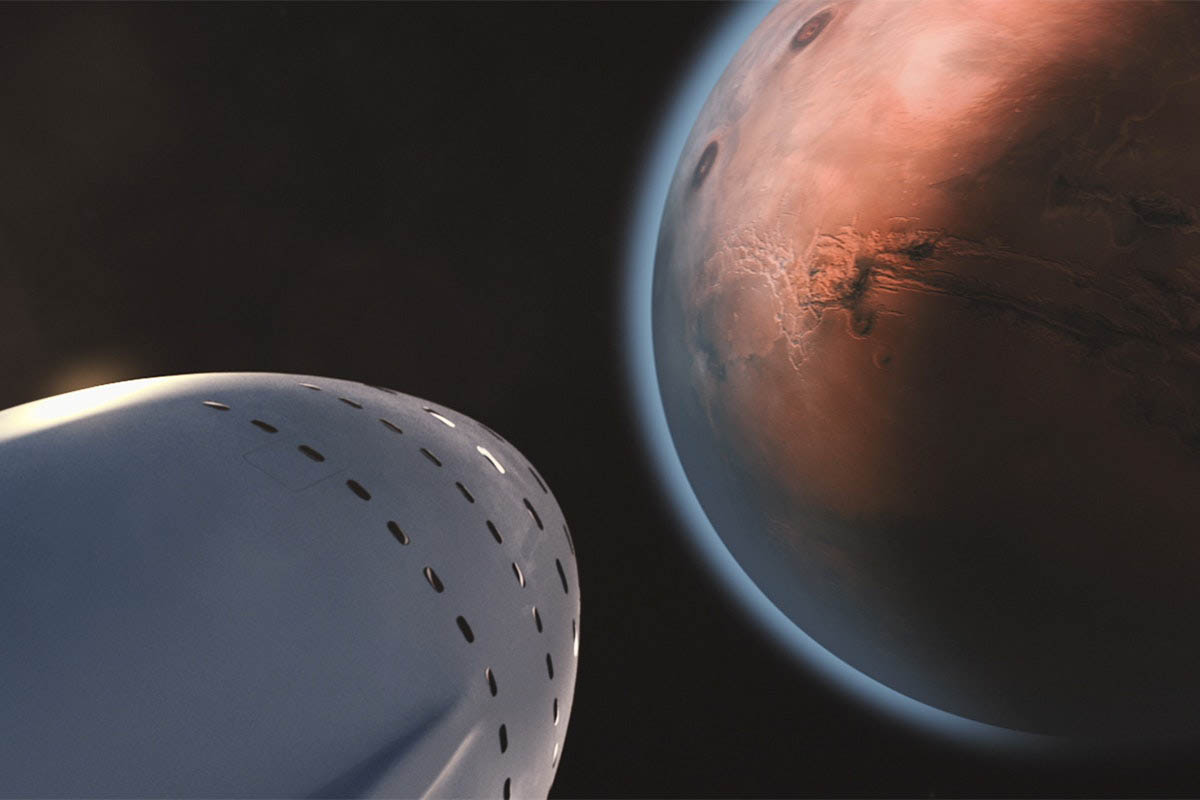

We’re not talking flying cars yet (or living on Mars), but the next 10 years?

They’re going to look really different.

And whether we’re ready or not, it’s already happening.

We’ve been hearing about AI, 5G, automation. But what does that actually mean for us, for businesses, for daily life?

Here’s what we’ve been thinking—and what the numbers are showing.

If you are interested in knowing how to implement some of these future-forward technologies in your business today, consider getting in touch with the experts at IT Consulting Houston.

Marketing Tech: Changing Fast, but Still About Human Needs

When I think about the future of consumption and what marketing technologies could offer… honestly?

I get a little scared.

Not because I don’t love innovation. But because I wonder—how far can marketing go before it stops asking for attention and just takes it?

It reminds me of The Dark Forest by Liu Cixin, the second book in The Three-Body Problem trilogy.

There’s this moment when the main character, Luo Ji, wakes up 200 years into the future.

He steps outside into a world so different, and what hits him first isn’t the buildings or tech—it’s the ads.

Ads everywhere. Projected in the sky. On glass walls. Floating above streets.

The ads follow him as he walks, adapting to him, reading him. They’re not random—they’re personal.

Marketing in that future isn’t just everywhere—it’s inescapable.

And honestly, when you look at where we’re heading with AI, augmented reality, and companies like Neuralink experimenting with brain-computer interfaces, that world doesn’t feel like fiction anymore.

We’re already living in a time where ads follow us from one app to another. Where algorithms predict what we’ll want before we search.

Imagine that scaled up—ads not on screens, but woven into your environment, your entertainment, even your thoughts.

(Black Mirror captured this too—in the episode where a woman has a chip implanted after an accident, and suddenly ads start playing directly into her mind unless she pays to upgrade or silence them.)

It sounds dystopian, right? But it’s also…possible.

Because even today, marketing is pushing deeper—not just into our attention spans, but into our emotions, our needs, our fears.

And yet, even with all this tech, one thing hasn’t changed:

The companies that win are still the ones that understand people.

The brands investing in sociology, market research, and reaching real pain points.

Because it’s not tech that moves people—it’s storytelling.

It’s empathy.

It’s meeting someone in a moment of need, and offering something that feels like an answer—not a sales pitch.

In a world where ads might someday surround us—on every surface, inside every interaction, maybe even inside our heads—the brands that stay human, that connect with real stories, will be the ones people trust.

Tech will keep evolving. Tools will keep shifting.

But storytelling? Connection? Understanding what really matters?

That’s timeless.

AI Will Be the New Voice We Interact With

It’s wild to think about, but by some forecasts, AI will handle over half of all human-computer interactions by next year.

We’re already talking to Alexa and Siri—but soon, AI won’t just answer questions. It’ll book things, write content, run customer service, train employees.

✅What this looks like for us:

We’ll talk to tech more than we type.

Businesses that don’t build conversational AI into their customer experience? They’ll start to feel outdated.

Smart Factories Are Taking Over

Some industry forecasts projected that more than 50 billion devices would be connected to the Industrial Internet of Things (IIoT) by 2025.

That means factories, machines, trucks, and robots all talking to each other—sharing data, predicting issues, fixing themselves.

✅What this looks like for us:

Faster shipping. Products that don’t run out. Companies that pivot quicker because their systems are smart.

Virtual and Augmented Reality

Virtual reality is a computer-generated simulation of a 3D environment, while augmented reality adds an overlay to the user’s real world. Both technologies are growing in popularity and are expected to be major drivers of innovation over the next decade.

Virtual reality (VR) is poised for explosive growth in the coming years. In just three years, sales of VR headsets grew from $1 million to $3 billion globally, according to Gartner research.

Goldman Sachs estimates that the virtual and augmented reality industry could reach a market size of $80 billion by 2025.

Augmented reality (AR) also has its share of impressive stats: IDC projected that augmented reality spending on mobile devices alone would exceed $60 billion between 2017 and 2022.

Data Is Growing Like Crazy

We’re making data like never before. By the end of the decade, the world will generate over 79 zettabytes of data a year (yep, a zettabyte is a billion terabytes).

Every tap, swipe, upload, purchase—it’s all data. And managing it will be a huge challenge and opportunity.
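To make that scale concrete, here is a quick back-of-the-envelope check of the "billion terabytes" figure. It is only a sketch, and it assumes decimal (SI) units, where a terabyte is 10^12 bytes and a zettabyte is 10^21 bytes. The variable names are ours, chosen for illustration.

```python
# Decimal (SI) storage units, expressed in bytes (an assumption; binary units differ slightly).
TERABYTE = 10**12
ZETTABYTE = 10**21

# How many terabytes fit in one zettabyte?
terabytes_per_zettabyte = ZETTABYTE // TERABYTE

# The article's figure of 79 zettabytes a year, converted to terabytes.
yearly_terabytes = 79 * terabytes_per_zettabyte

print(f"1 ZB = {terabytes_per_zettabyte:,} TB")
print(f"79 ZB = {yearly_terabytes:,} TB")
```

So 79 zettabytes works out to 79 billion terabytes, which is why storage and management become a challenge of their own.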

✅What this looks like for us:

More personalized everything—but also bigger questions about privacy and how our info’s being used.

For businesses, using data well (and responsibly) will make or break them.

Cloud Computing: Powering Everything, Everywhere

Cloud computing is basically the idea of running software and storing data somewhere else—on servers you access over the internet, instead of keeping everything on your own computer or company hardware.

It’s how we stream movies, back up photos, share documents, and run business apps without needing giant storage drives or expensive servers at home or in the office.

For businesses, the shift to cloud means buying tech like a service (think “pay-as-you-go”) instead of buying and maintaining their own data centers.

This lets them scale up or down, access their systems from anywhere, and cut costs on equipment and IT staff.
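Here is a rough sketch of how that pay-as-you-go trade-off can be compared with owning hardware. Every number in it is made up for illustration (they are not real prices), and the function names are hypothetical. The point is only to show the shape of the comparison: cloud costs start low and accumulate, while owned hardware starts with a large upfront bill.

```python
from typing import Optional


def on_prem_cost(months: int, upfront: float, monthly_upkeep: float) -> float:
    """Cumulative cost of owning hardware: one upfront purchase plus monthly upkeep."""
    return upfront + monthly_upkeep * months


def cloud_cost(months: int, monthly_fee: float) -> float:
    """Cumulative cost of a pay-as-you-go service with a flat monthly fee."""
    return monthly_fee * months


def break_even_month(upfront: float, monthly_upkeep: float, monthly_fee: float) -> Optional[int]:
    """First month in which cumulative cloud spend catches up with on-prem spend.

    Returns None when the cloud fee never exceeds the on-prem upkeep,
    because cloud then stays cheaper indefinitely.
    """
    if monthly_fee <= monthly_upkeep:
        return None
    month = 1
    while cloud_cost(month, monthly_fee) < on_prem_cost(month, upfront, monthly_upkeep):
        month += 1
    return month


# Illustrative, invented figures: 60,000 upfront with 1,000/month upkeep,
# versus a 3,000/month cloud subscription.
first_year_cloud = cloud_cost(12, 3_000)
first_year_on_prem = on_prem_cost(12, 60_000, 1_000)
catch_up = break_even_month(60_000, 1_000, 3_000)

print(first_year_cloud, first_year_on_prem, catch_up)
```

With these invented figures, the cloud option costs half as much in the first year and only catches up after 30 months. Real pricing varies with usage, so treat this as a way to frame the question rather than an answer.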

Today, most companies aren’t choosing just one cloud anymore. By now, over 80% of businesses are using hybrid-cloud or multi-cloud setups, mixing public, private, and on-premises solutions to fit their needs.

This trend keeps growing as businesses spread out their data and apps across different platforms for flexibility and security.

Why do so many switch?

✅ Lower upfront costs;

✅ Less maintenance;

✅ More flexibility to scale;

✅ More reliability, with backups across regions.

And here’s what’s even more exciting: cloud computing is setting the stage for the next wave of innovation—quantum computing.

Microsoft is one of the companies leading the charge with its Azure Quantum platform, which gives developers and researchers access to early quantum computing tools through the cloud.

While quantum computers aren’t replacing classical computers yet, the fact that you can already experiment with quantum algorithms via cloud services shows how fast things are moving.

In the next few years, cloud + quantum could unlock solutions for industries like healthcare, finance, and energy—solving problems too complex for traditional computers.

In short, cloud computing isn’t just about offloading storage. It’s becoming the backbone for everything from AI to quantum experiments to the apps we use every day.

Internet of Things (IoT): Connecting Everything Around Us

The Internet of Things (IoT) isn’t just about smart home gadgets anymore—it’s the network that connects everything from your car to your fridge to industrial machines in factories.

Basically, IoT means physical devices (like vehicles, appliances, wearables, even buildings) are embedded with sensors, software, and other tech so they can collect, send, and even act on data.

These devices talk to each other over the internet, creating a connected system that can be monitored, adjusted, or automated from anywhere.

Here’s what this looks like in real life:

✅ Your thermostat adjusting itself based on your schedule.

✅ Sensors tracking machine performance in a factory to prevent breakdowns.

✅ Delivery trucks rerouting in real time based on traffic updates.

Right now, experts estimate there are over 15 billion connected IoT devices worldwide (much higher than just a few years ago).

And by 2035, some forecasts expect that number to explode past 100 billion devices globally.

We’re also seeing huge growth in Industrial IoT (IIoT)—where this tech is powering smarter factories, warehouses, farms, and energy grids.

If you’re wondering how IoT could be used in your specific industry—whether it’s retail, healthcare, logistics, or manufacturing—it’s worth exploring custom solutions that fit your goals. (A tech consultant can help map that out.)

The takeaway? IoT isn’t just about connecting devices.

It’s about using data from those devices to work smarter, safer, and more efficiently—at home and at scale.

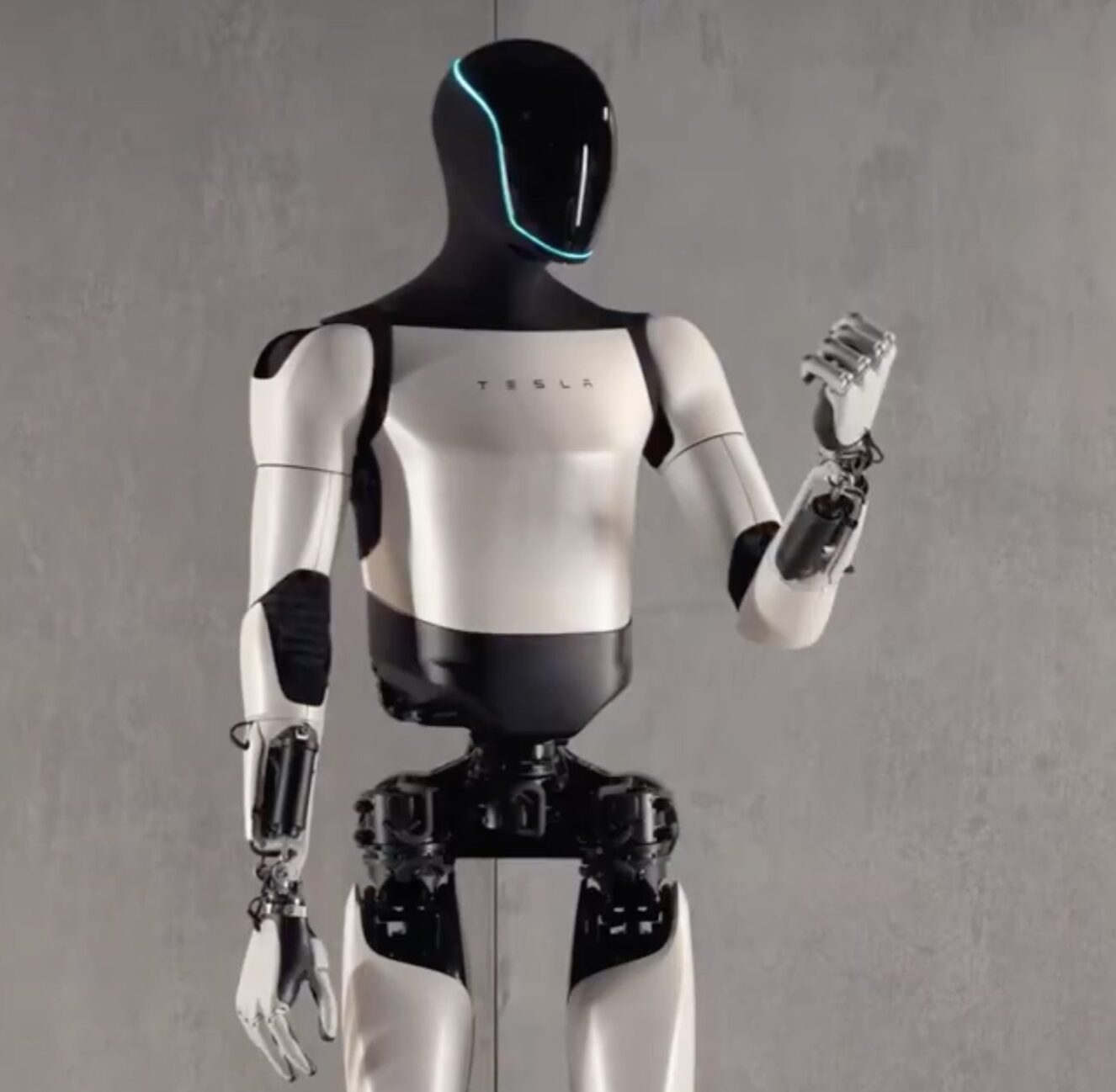

Advanced Robotics: From Factories to Everyday Life

Robots aren’t just for factories anymore.

Over the past few years, advanced robotics has moved into homes, hospitals, restaurants, warehouses, and even military operations.

Sure, robots have been doing repetitive factory work for decades. But today? Their skills have leveled up.

Now we’ve got robots that:

✅ Deliver packages across cities;

✅ Flip burgers and make coffee;

✅ Assist surgeons in delicate operations;

✅ Clean airports and public spaces;

✅ Provide care and companionship in senior living centers.

In 2025, we’re seeing robotics blend with AI, machine vision, and machine learning—which means robots aren’t just following instructions.

They’re learning, adapting, and making decisions in real time.

Tesla’s humanoid robot, Optimus, is one of the most talked-about examples. First revealed by Elon Musk in 2021 and still in development, Optimus aims to handle repetitive, dangerous, or boring tasks, anything from assembly-line work to household chores.

Musk envisions a future where robots like Optimus could assist in elder care, logistics, or even personal errands.

Other companies are jumping in too:

✅ Starbucks testing robot baristas;

✅ Amazon scaling up warehouse robots;

✅ Tesla integrating robotics into its factories and autonomous systems.

In healthcare, surgical robots like the da Vinci system are now standard in many hospitals, enabling minimally invasive procedures with greater accuracy.

And in the military? Drones, autonomous vehicles, and AI-powered surveillance robots are becoming part of defense strategies.

What’s next?

Experts expect robots to play even bigger roles in elder care, healthcare, delivery, agriculture, and construction—filling gaps as industries automate to stay efficient.

✅The takeaway: robots aren’t here to replace people—they’re augmenting human work, handling the repetitive, dangerous, or ultra-precise tasks so humans can focus on what needs creativity, empathy, and judgment.

Advanced robotics isn’t sci-fi anymore. It’s already happening—and it’s growing fast.

Biometric Technology

Biometric technology uses unique, measurable human characteristics to identify an individual.

Biometric systems have been around for a long time, but they’re becoming increasingly important as digital technology infiltrates every aspect of our lives.

The most common forms of biometrics include fingerprint recognition and face recognition.

✅But there are many other types: iris recognition (patterns in the colored ring of the eye), retina scans (blood vessel patterns at the back of the eye), voice recognition, vein pattern recognition (veins visible through skin), DNA recognition, and even facial thermographs that measure temperature differences across the face.

One or more types of biometric authentication will come to the fore as password-based authentication is phased out to counter rising digital threats.

So What Should We Do Now?

It’s not about jumping on every tech trend.

But staying curious? Trying new tools?

Looking ahead instead of reacting too late? That’s key.

Whether you’re running a business or just figuring out your next career move, here’s what might help:

✅ Try AI tools for writing, customer service, marketing.

✅ Look at automation to save time or cut costs.

✅ Explore IoT solutions if you’re in retail, healthcare, logistics.

✅ Learn about data—how it’s collected, used, protected.

The next decade will reward people and businesses that stay adaptable, open, and willing to evolve.